Sonar Legal

As founding engineer, I took Sonar Legal from concept to a production-ready legal AI assistant, building LLM integrations, agentic workflows, and enterprise-grade infrastructure that give law firms document analysis, research, and editing capabilities.

Overview

From Zero to Production: Building Legal AI

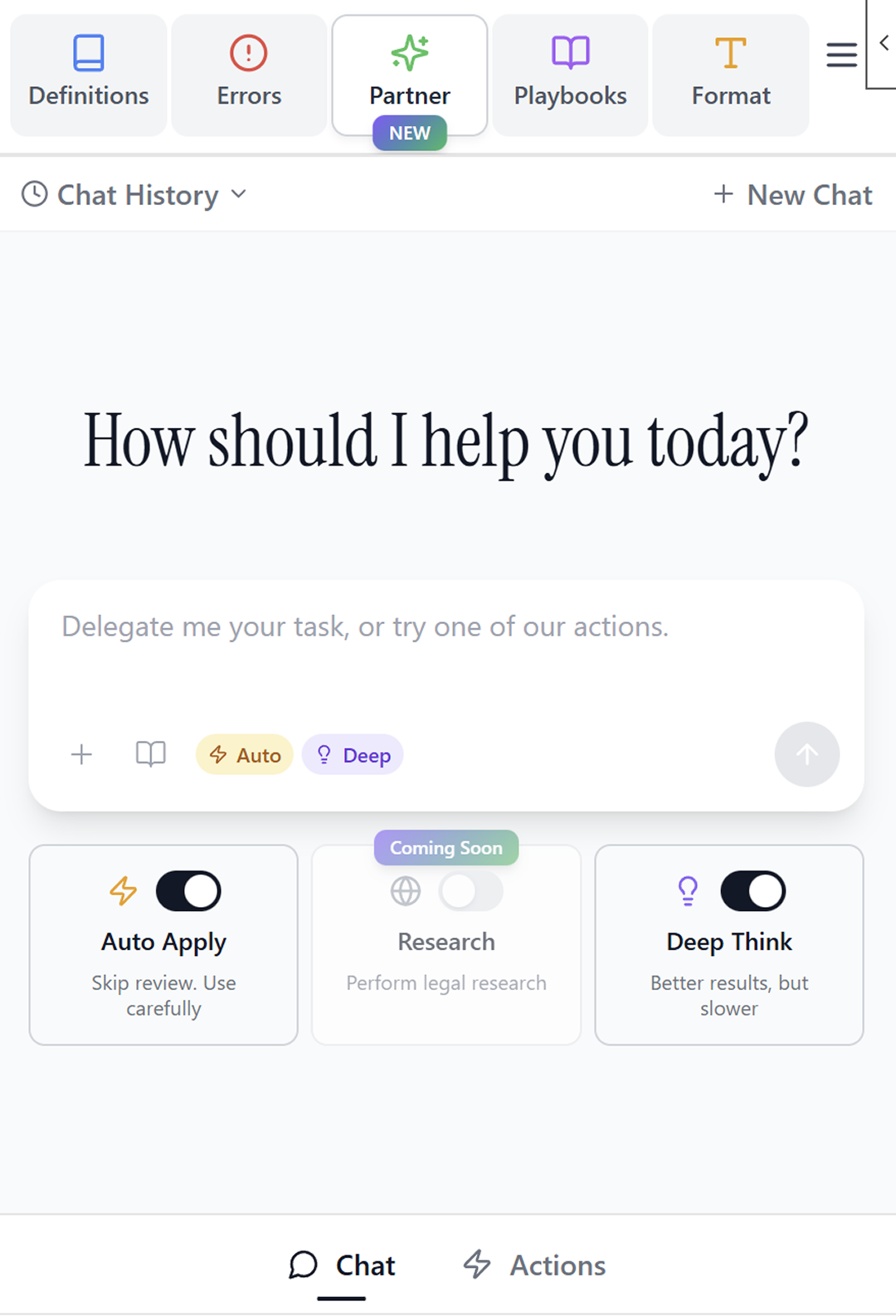

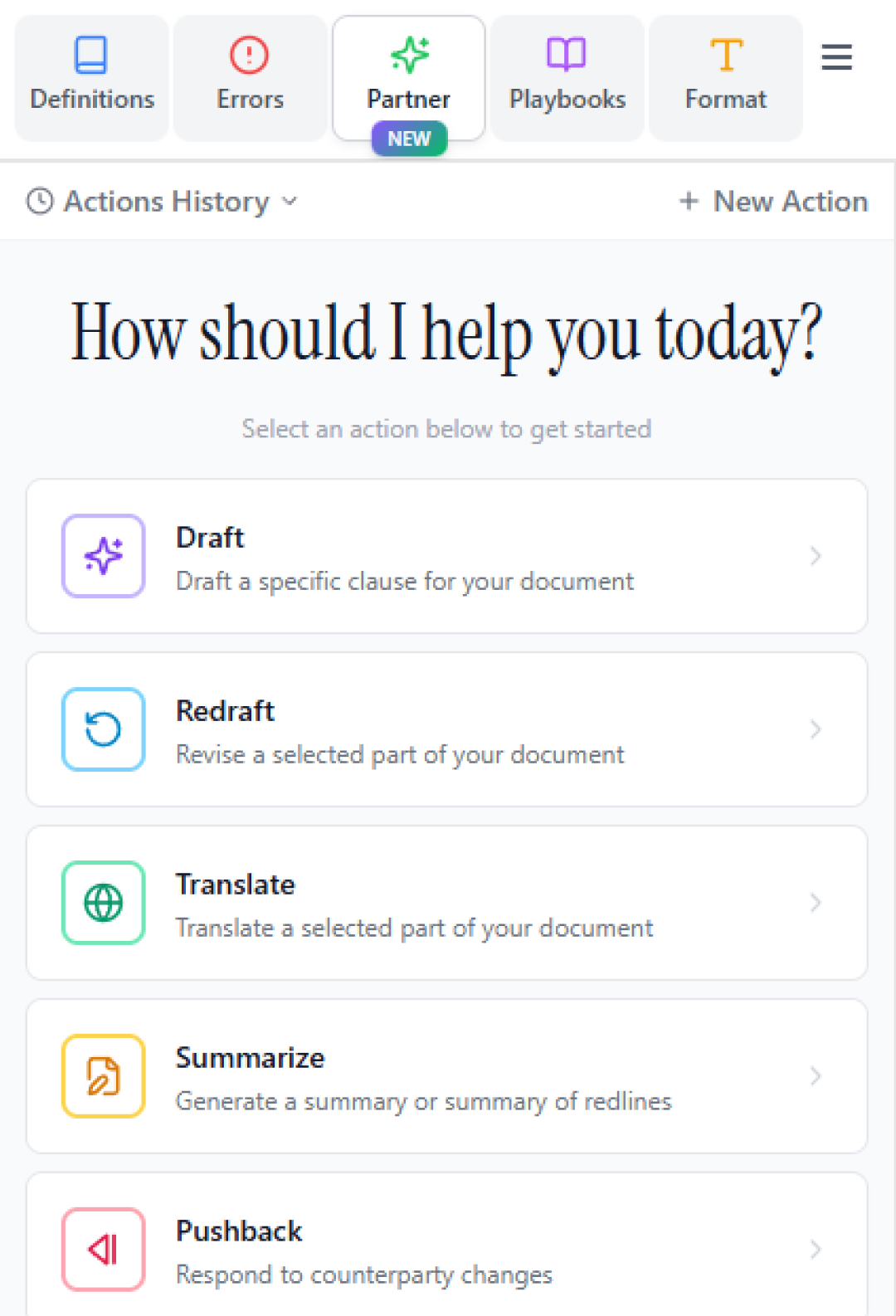

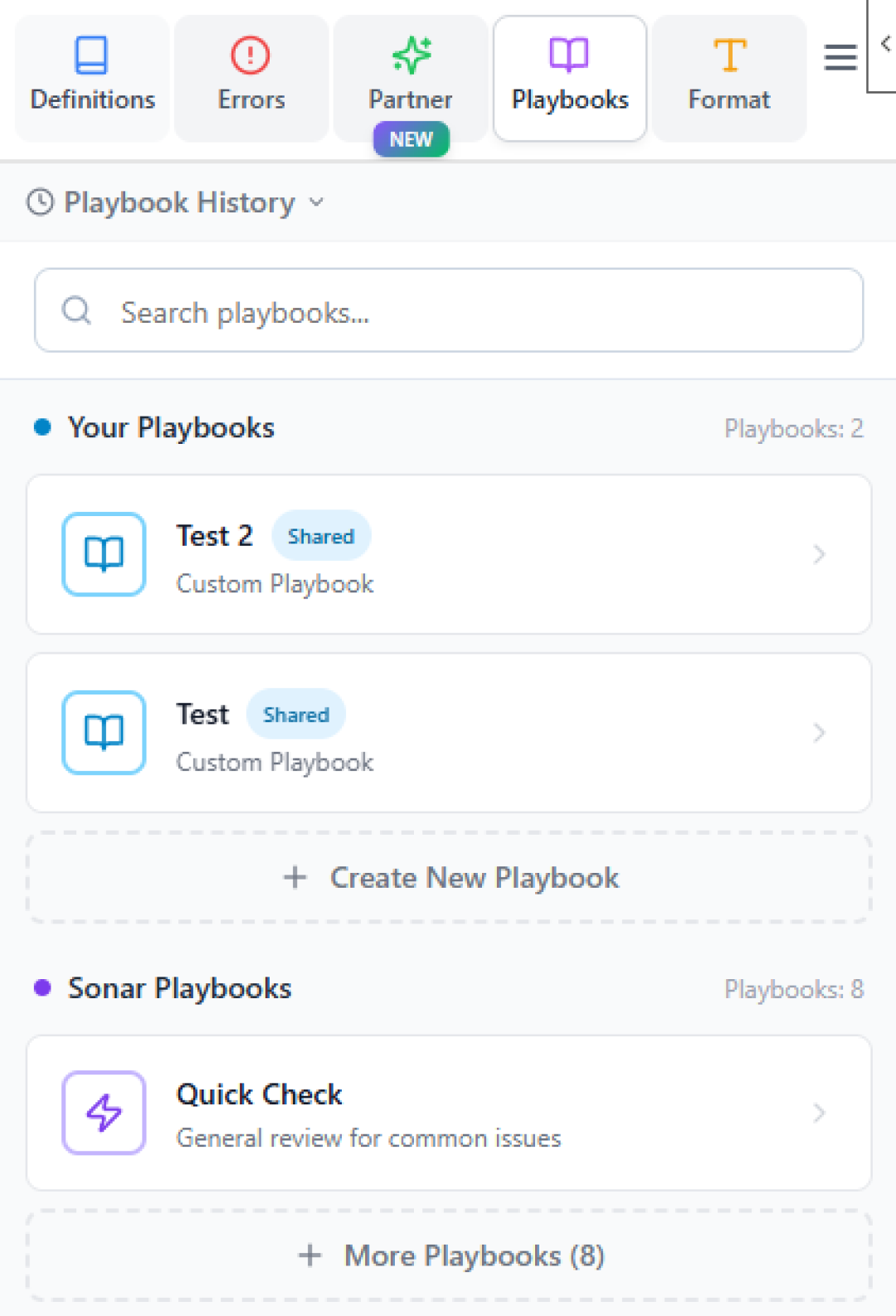

I joined Sonar Legal as the founding engineer with a clear mission: to transform legal workflows with AI. Starting from nothing but an idea, I architected and built a production-ready legal AI assistant for Microsoft Office that gives law firms document analysis, agentic editing, legal research, automatic formatting, and playbook-based document reviews.

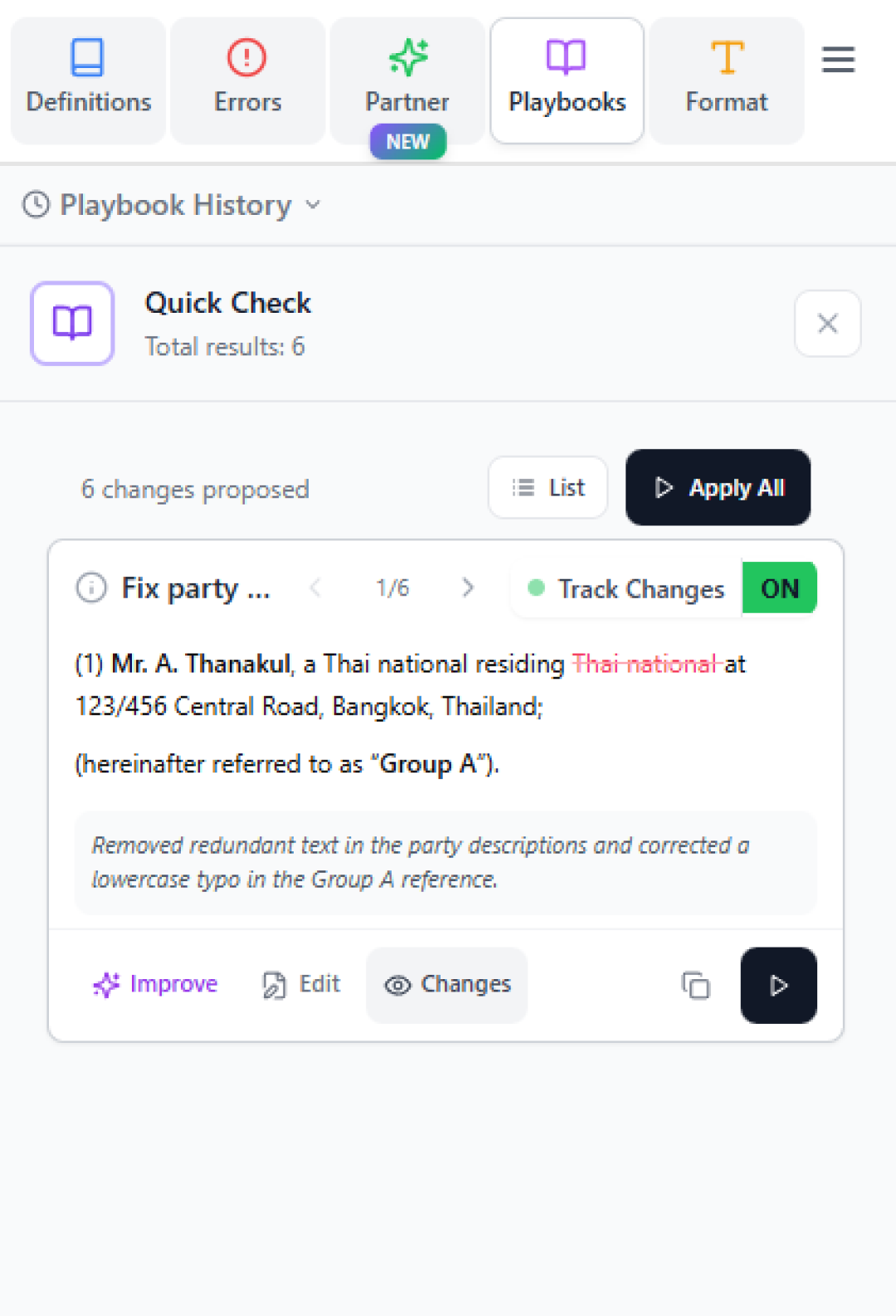

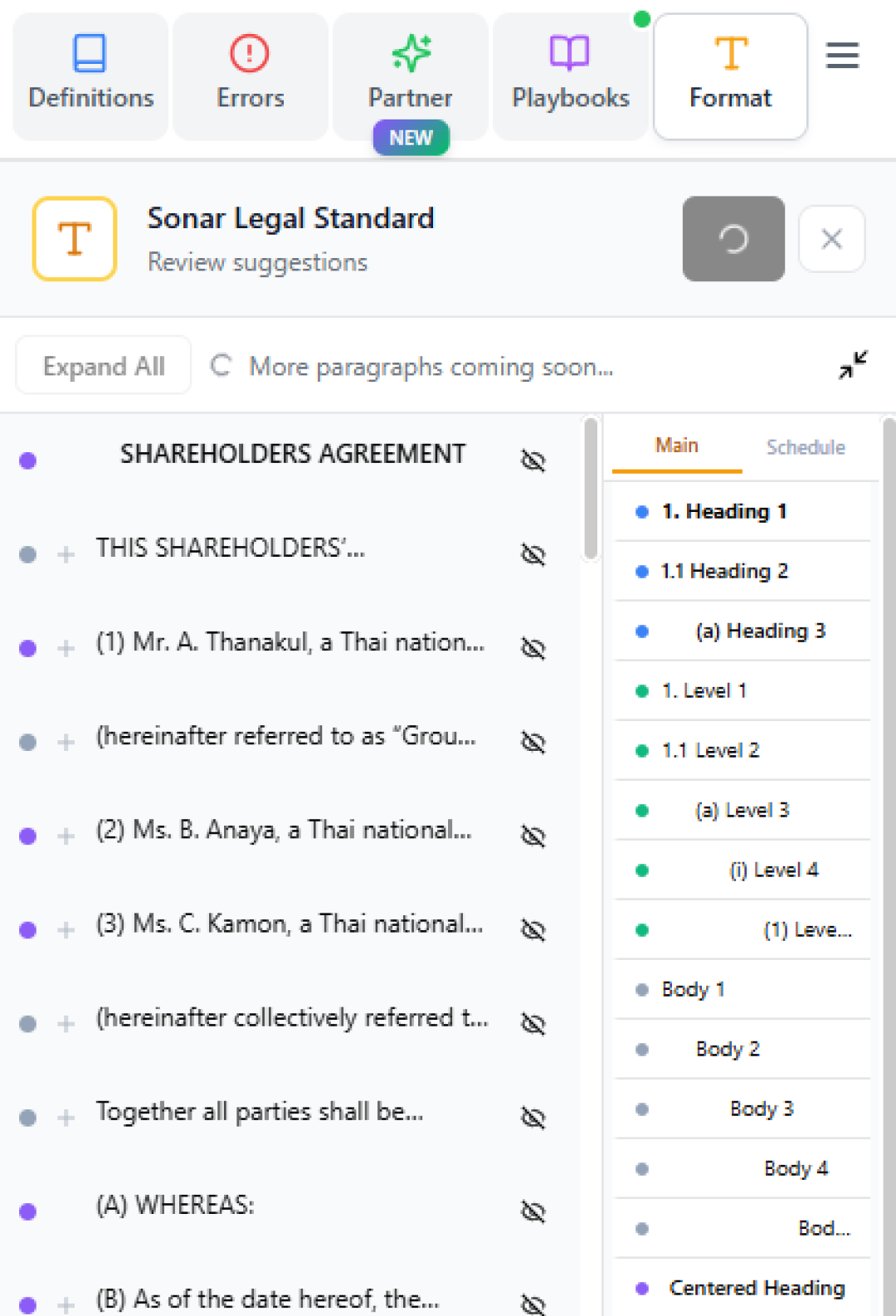

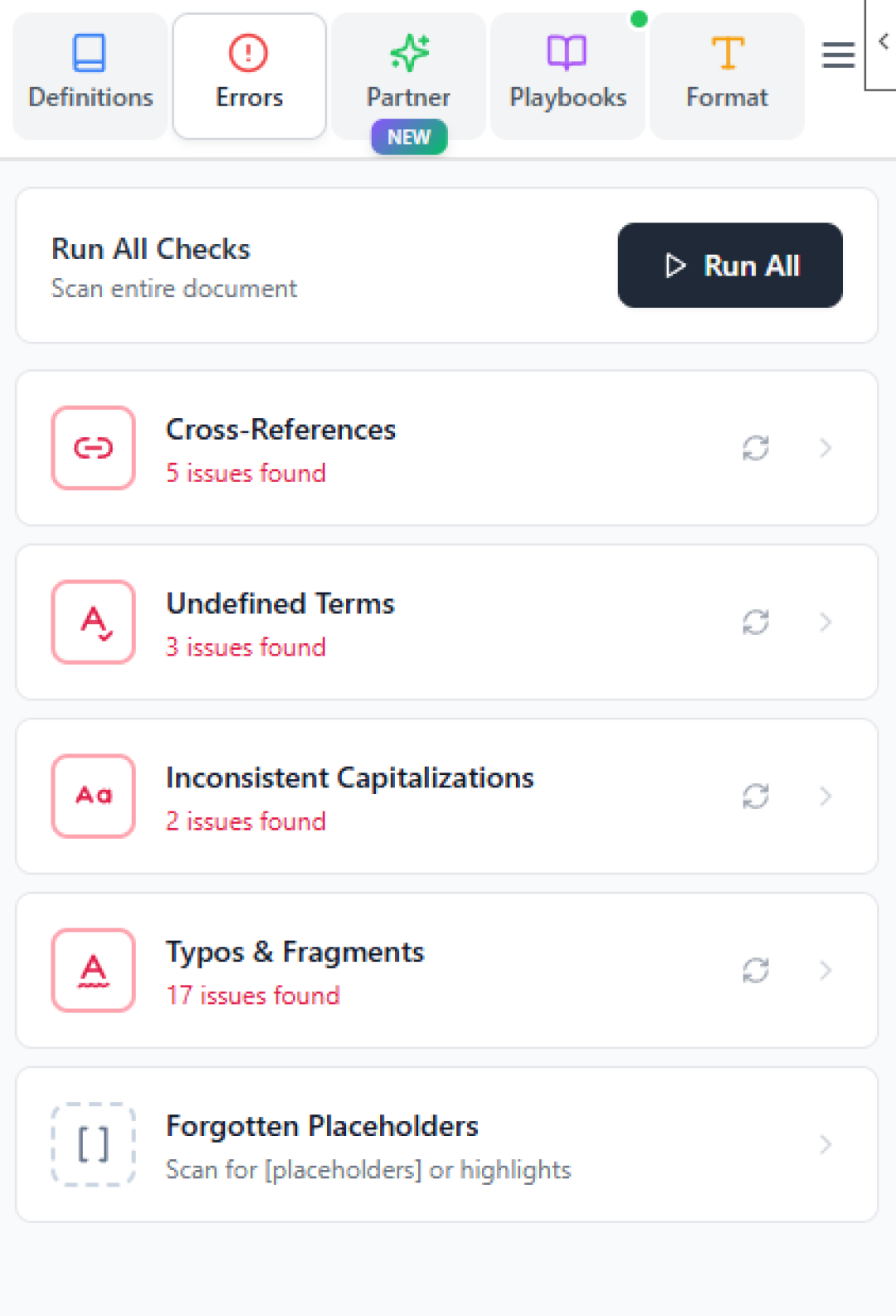

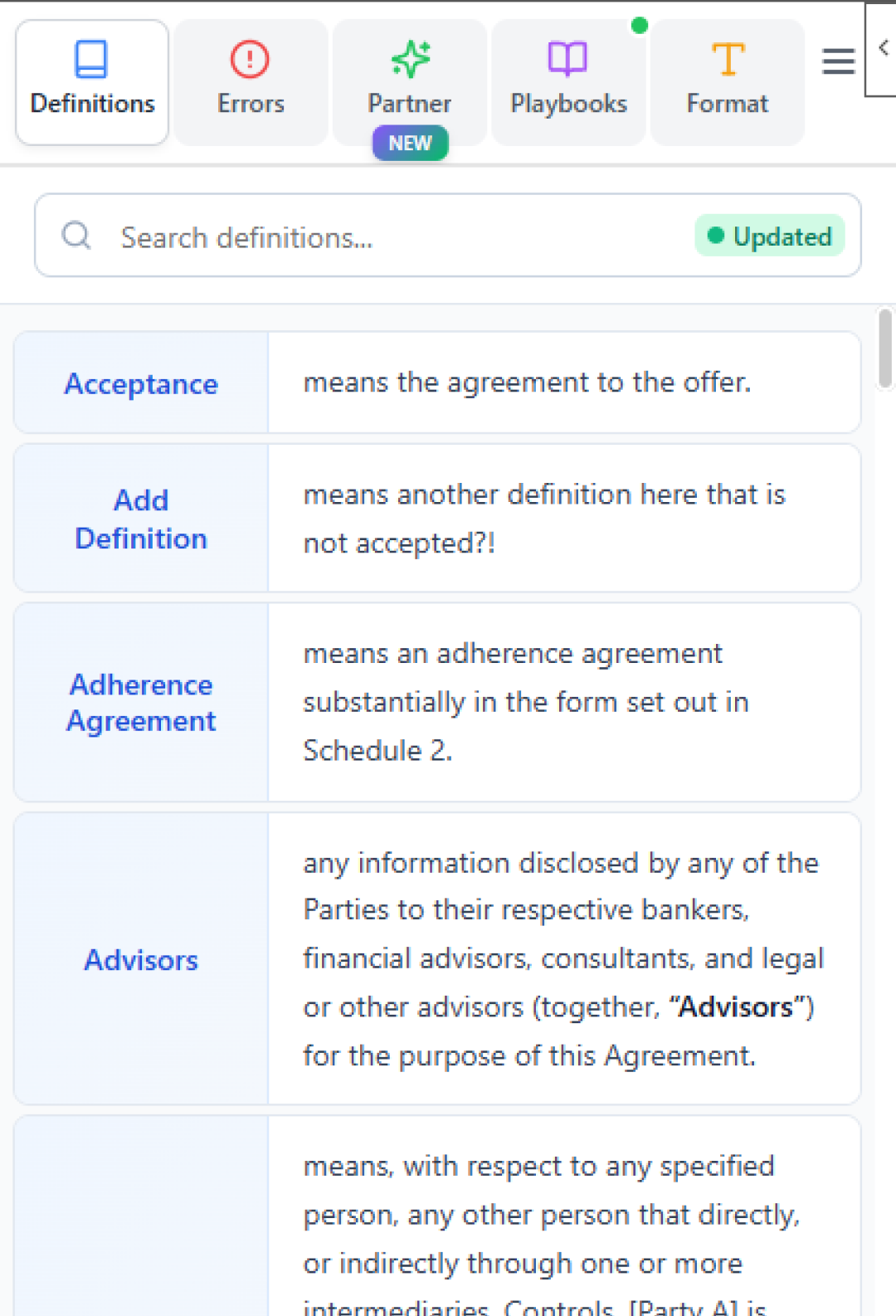

The core challenge was integrating LLMs with the OfficeJS API so that documents can be edited directly from the add-in side panel while preserving all formatting. I engineered efficient API usage patterns that don't slow down Microsoft Word, designed the entire MVP UI, and evolved ideas into intuitive features that lawyers can use without extensive training. The system is review-based and copilot-focused: lawyers stay in control while AI assists.

Beyond the Word add-in, I'm building our new web app, which features bulk document reviews with intelligent parsing, efficiently batched requests to fine-tuned LLMs for speed, live updates via server-sent events (SSE), and a legal research agent with a deep understanding of Thai law that cites every answer so it stays grounded. I built advanced RAG pipelines using pgvector and Jina embeddings with a hybrid approach, allowing customers to power the app's responses with their existing knowledge bases rather than relying solely on the LLM. I took this from idea to revenue-generating product, handling everything from architecture to deployment.

Impact

Full journey: idea → MVP → production

Multi-model: the optimal LLM per task

Enterprise: SSO and on-prem deployments

Lawyers always in control

Technologies Used

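AI & LLMs: LLMs from multiple providers, fine-tuned models, Azure OpenAI, Langfuse

RAG Pipeline: pgvector, Jina AI embeddings

Backend: Hono, Bun, PostgreSQL, Redis

Frontend & Office: OfficeJS (Microsoft Word add-in), web app

Infrastructure: Turborepo, dev/staging/prod environments, on-prem deployments, PostHog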

Key Feature

Intelligent Document Workflows with Citations

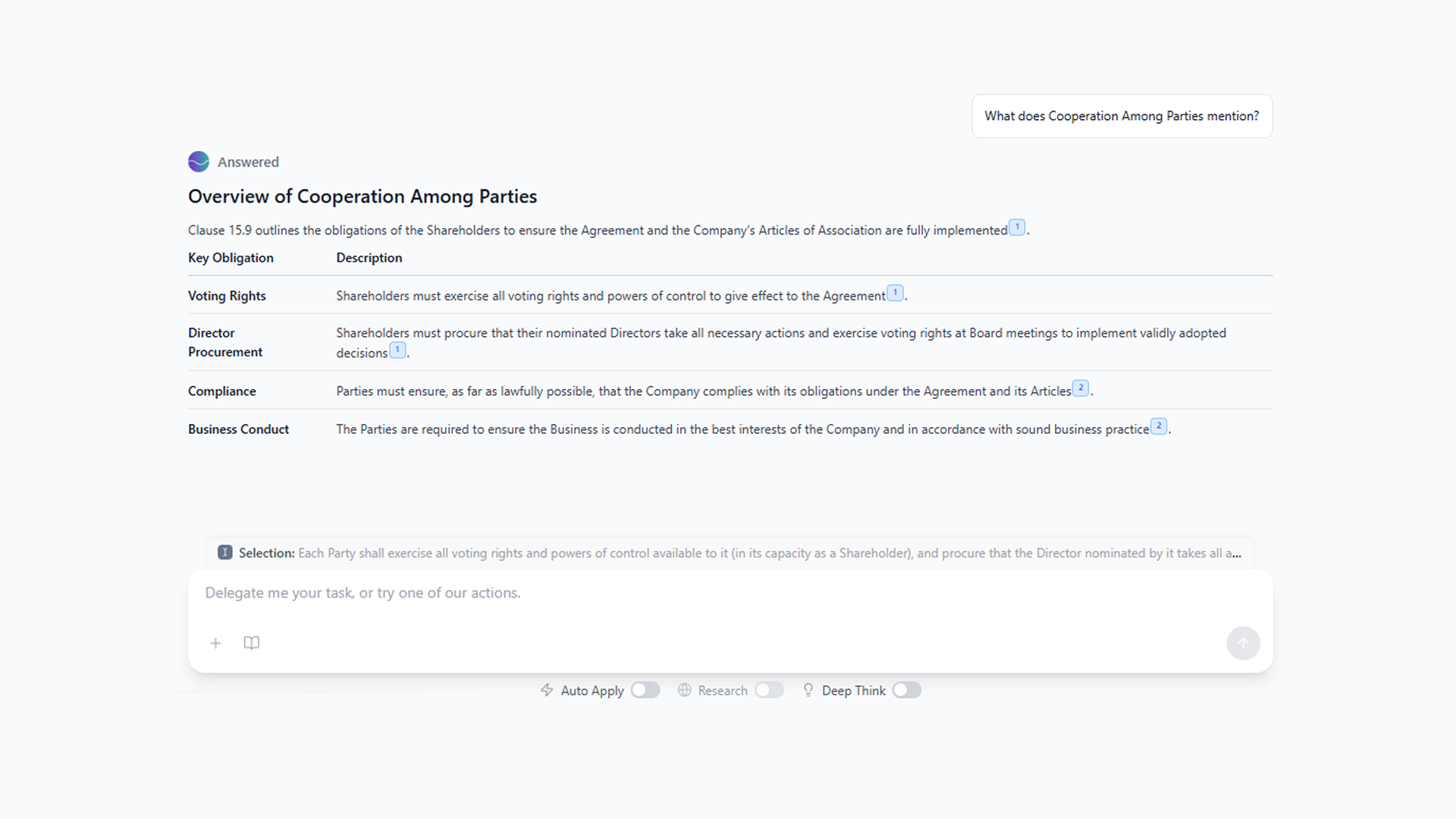

Engineered prompts so that LLM outputs follow a consistent structure, letting content move between documents and LLMs without breaking formatting or layout. Added citations to document Q&A workflows so lawyers can quickly navigate large documents and find what they need with confidence. Every answer is grounded in the document and verifiable.
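One way to make Q&A answers navigable is to have the prompt require paragraph-level citation markers and then resolve them against the document before display. The sketch below assumes a hypothetical `[¶N]` marker convention with 0-based paragraph indices; the names are illustrative, not the product's code. Markers that point past the end of the document are reported rather than silently dropped, so an answer citing nonexistent text can be flagged as unverified.

```typescript
interface ResolvedCitation {
  paragraphIndex: number;
  preview: string; // short excerpt shown next to the answer
}

// Hypothetical convention: the model cites paragraphs as [¶N], N being a 0-based index.
const CITATION_MARKER = /\[¶(\d+)\]/g;

function resolveCitations(
  answer: string,
  paragraphs: string[],
): { resolved: ResolvedCitation[]; missing: number[] } {
  const resolved: ResolvedCitation[] = [];
  const missing: number[] = [];
  const seen = new Set<number>();
  for (const match of answer.matchAll(CITATION_MARKER)) {
    const index = Number(match[1]);
    if (seen.has(index)) continue; // list each cited paragraph once
    seen.add(index);
    if (index < paragraphs.length) {
      resolved.push({ paragraphIndex: index, preview: paragraphs[index].slice(0, 80) });
    } else {
      missing.push(index); // cited paragraph does not exist: treat the answer as unverified
    }
  }
  return { resolved, missing };
}
```

In a Word add-in, a resolved index could be mapped back to the matching paragraph and passed to `Paragraph.select()` so the lawyer lands directly on the cited text.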

Key Challenges & Solutions

Seamless OfficeJS Integration

Integrated LLMs with the OfficeJS API to enable direct document editing from the add-in side panel while preserving all document formatting. Engineered efficient API usage patterns that maintain the smooth Microsoft Word experience users expect, without lag or interruption.
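A common way to keep Office.js calls from slowing Word down is to queue reads and writes on the request context and flush them with as few `context.sync()` round-trips as possible. The sketch below assumes edits arrive as a map from paragraph index to replacement text (a hypothetical shape). The small structural interfaces are stand-ins for `Word.RequestContext`, intended to be compatible with it, so the logic can also run outside Office; inside an add-in it would be called as `Word.run((context) => applyParagraphEdits(context, edits))`. It illustrates only the batching pattern, not how formatting is preserved.

```typescript
// Minimal structural stand-ins for the few Word API members used here.
interface ParagraphLike {
  text: string;
  insertText(text: string, location: "Replace"): unknown;
}

interface RequestContextLike {
  document: {
    body: { paragraphs: { items: ParagraphLike[]; load(propertyNames: string): unknown } };
  };
  sync(): Promise<unknown>;
}

// Applies paragraph-level replacements using two round-trips in total:
// one sync to read every paragraph's text, one sync to write all changes.
async function applyParagraphEdits(
  context: RequestContextLike,
  edits: Map<number, string>, // hypothetical shape: paragraph index -> new text
): Promise<number> {
  const paragraphs = context.document.body.paragraphs;
  paragraphs.load("items/text"); // queued, not sent yet
  await context.sync(); // round-trip 1: read

  let applied = 0;
  for (const [index, newText] of edits) {
    const paragraph = paragraphs.items[index];
    // Skip out-of-range indices and no-op edits to avoid needless writes.
    if (paragraph !== undefined && paragraph.text !== newText) {
      paragraph.insertText(newText, "Replace"); // queued, not sent yet
      applied++;
    }
  }

  await context.sync(); // round-trip 2: write every queued edit at once
  return applied;
}
```

Calling `context.sync()` inside the loop instead would cost one round-trip per paragraph, which adds up quickly on long contracts.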

Intuitive UX Design

Designed the entire MVP UI and worked closely with legal partners to evolve ideas into functional, intuitive features that don't require extensive training. Made the system review-based and copilot-focused so lawyers are always in control rather than having AI act autonomously.
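A review-based flow can be modeled as proposed edits that stay pending until a lawyer accepts or rejects them, with only accepted edits ever written to the document. The sketch below is a hypothetical reducer (all type and function names are illustrative, not taken from the product) showing that state model.

```typescript
type EditStatus = "pending" | "accepted" | "rejected";

interface ProposedEdit {
  id: string;
  original: string;
  suggested: string;
  status: EditStatus;
}

type ReviewAction =
  | { type: "accept"; id: string }
  | { type: "reject"; id: string }
  | { type: "acceptAll" };

// Pure reducer: the AI only creates "pending" edits; status changes come from the user.
function reviewReducer(edits: ProposedEdit[], action: ReviewAction): ProposedEdit[] {
  switch (action.type) {
    case "accept":
    case "reject": {
      const id = action.id;
      const status: EditStatus = action.type === "accept" ? "accepted" : "rejected";
      // Only pending edits can change; a decided edit stays decided.
      return edits.map((e): ProposedEdit => (e.id === id && e.status === "pending" ? { ...e, status } : e));
    }
    case "acceptAll":
      return edits.map((e): ProposedEdit => (e.status === "pending" ? { ...e, status: "accepted" } : e));
  }
}

// Only accepted edits are handed to the code that writes into the document.
function editsToApply(edits: ProposedEdit[]): ProposedEdit[] {
  return edits.filter((e) => e.status === "accepted");
}
```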

Smart Prompt Engineering

Designed prompts that make LLMs return output in a predictable structure, so content can move between documents and the model without losing formatting, layout, or text. This was critical for maintaining document integrity while leveraging AI capabilities.
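Structured output only helps if it is checked before it touches the document. The sketch below assumes a hypothetical JSON schema for edits (paragraph index, replacement, rationale) that a prompt would ask the model to return, and rejects anything that does not match instead of guessing. The schema and function names are illustrative.

```typescript
// Hypothetical schema the prompt would ask the model to return: a JSON array of edits.
interface ParagraphEdit {
  paragraphIndex: number;
  replacement: string;
  rationale: string;
}

function isParagraphEdit(value: unknown): value is ParagraphEdit {
  if (typeof value !== "object" || value === null) return false;
  const o = value as Record<string, unknown>;
  const idx = o.paragraphIndex;
  return (
    typeof idx === "number" &&
    Number.isInteger(idx) &&
    idx >= 0 &&
    typeof o.replacement === "string" &&
    typeof o.rationale === "string"
  );
}

// Returns the edits if the whole payload matches the schema, otherwise null.
function parseEdits(raw: string): ParagraphEdit[] | null {
  // Models sometimes wrap JSON in a markdown code fence; strip it if present.
  const body = raw.replace(/^\s*`{3}(?:json)?\s*/i, "").replace(/\s*`{3}\s*$/, "");
  let parsed: unknown;
  try {
    parsed = JSON.parse(body);
  } catch {
    return null; // not JSON at all
  }
  if (!Array.isArray(parsed) || !parsed.every(isParagraphEdit)) return null;
  return parsed;
}
```

Rejecting the whole payload, rather than applying the valid subset, keeps a partly malformed response from leaving the document half-edited; the caller can re-prompt instead.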

Multi-Model Optimization

Combined LLMs from different providers, using the best-suited model for each task instead of a one-size-fits-all approach. This strategy balances performance, cost, and accuracy across the common-error tracking, agentic editing, legal research, and document formatting features.
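Per-task model selection can be kept as data: each feature lists candidate models in order of preference, and the router picks the first one whose provider is enabled for the current deployment. In the sketch below, the task keys mirror the features named above, but every provider and model name is a placeholder, not the product's actual configuration.

```typescript
type Task = "commonErrorTracking" | "agenticEditing" | "legalResearch" | "documentFormatting";

interface ModelChoice {
  provider: string;
  model: string;
}

// Hypothetical routing table: provider and model names are placeholders.
// Candidates are listed in order of preference for each task.
const ROUTES: Record<Task, ModelChoice[]> = {
  commonErrorTracking: [
    { provider: "provider-a", model: "small-fast-model" },
    { provider: "azure-openai", model: "small-deployment" },
  ],
  agenticEditing: [
    { provider: "provider-b", model: "large-reasoning-model" },
    { provider: "azure-openai", model: "large-deployment" },
  ],
  legalResearch: [
    { provider: "provider-b", model: "large-reasoning-model" },
    { provider: "azure-openai", model: "large-deployment" },
  ],
  documentFormatting: [
    { provider: "provider-a", model: "small-fast-model" },
    { provider: "azure-openai", model: "small-deployment" },
  ],
};

// Returns the first preferred model whose provider is enabled for this deployment.
function pickModel(task: Task, enabledProviders: ReadonlySet<string>): ModelChoice {
  const choice = ROUTES[task].find((c) => enabledProviders.has(c.provider));
  if (choice === undefined) {
    throw new Error(`No enabled provider for task "${task}"`);
  }
  return choice;
}
```

Keeping the preference order in data rather than code means a deployment restricted to Azure OpenAI can reuse the same routing logic with a different set of enabled providers.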

Enterprise-Grade Backend

Built a fast, robust API using Hono running on Bun, with Redis for caching and PostgreSQL row-level security to keep each client's data isolated. Set up rigorous testing across separate dev, staging, and prod environments so changes are validated before they reach production. Added single sign-on (SSO) for enterprise customers.
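PostgreSQL row-level security is commonly paired with a per-transaction setting that the policy reads. The sketch below assumes a hypothetical `documents` table with an `org_id` column and a policy comparing it to `current_setting('app.current_org')`; `set_config(..., true)` scopes the value to the current transaction. `Queryable` is a stand-in for whatever Postgres client the backend uses. Note that RLS is bypassed by superusers and, unless `FORCE ROW LEVEL SECURITY` is set, by the table owner, so the application should connect as a separate role.

```typescript
// Minimal stand-in for a Postgres client (hypothetical interface).
interface Queryable {
  query(text: string, values?: unknown[]): Promise<{ rows: unknown[] }>;
}

// Assumed policy, created once in a migration (table and setting names are hypothetical):
//   ALTER TABLE documents ENABLE ROW LEVEL SECURITY;
//   CREATE POLICY tenant_isolation ON documents
//     USING (org_id = current_setting('app.current_org')::uuid);

// Runs `fn` inside a transaction in which the policy only exposes `orgId`'s rows.
async function withTenant<T>(
  client: Queryable,
  orgId: string,
  fn: (tx: Queryable) => Promise<T>,
): Promise<T> {
  await client.query("BEGIN");
  try {
    // is_local = true: the setting is discarded at COMMIT or ROLLBACK,
    // so a pooled connection never carries one tenant's id into the next request.
    await client.query("SELECT set_config('app.current_org', $1, true)", [orgId]);
    const result = await fn(client);
    await client.query("COMMIT");
    return result;
  } catch (err) {
    await client.query("ROLLBACK");
    throw err;
  }
}
```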

Intelligent Bulk Processing

For the web app, built intelligent document parsing with efficiently batched requests to fine-tuned LLMs to keep reviews fast. Implemented live updates with server-sent events (SSE) so users see progress in real time. Designed the system to handle high-volume document reviews without sacrificing accuracy or performance.
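Batching and live progress fit together naturally: group documents into batches, keep a bounded number of batch requests in flight, and emit a server-sent event after each batch completes. In the sketch below, `reviewBatch` stands in for the call to the fine-tuned model and `emit` for writing to an open SSE response; both are hypothetical. The frames follow the standard `event:`/`data:` SSE wire format.

```typescript
// Splits items into consecutive groups of at most `size`.
function chunk<T>(items: T[], size: number): T[][] {
  if (size < 1) throw new Error("size must be at least 1");
  const out: T[][] = [];
  for (let i = 0; i < items.length; i += size) out.push(items.slice(i, i + size));
  return out;
}

// Formats one server-sent event frame: an event name, a JSON data line, and a blank line.
function sseFrame(event: string, data: unknown): string {
  return `event: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}

async function reviewInBatches<T, R>(
  items: T[],
  batchSize: number,
  concurrency: number,
  reviewBatch: (batch: T[]) => Promise<R[]>, // hypothetical: one model request per batch
  emit: (frame: string) => void, // hypothetical: writes to an open SSE response
): Promise<R[]> {
  const batches = chunk(items, batchSize);
  const results: R[][] = new Array(batches.length);
  let nextBatch = 0;
  let reviewed = 0;

  // Each worker claims the next unclaimed batch until none remain,
  // so at most `concurrency` requests are in flight at once.
  async function worker(): Promise<void> {
    while (nextBatch < batches.length) {
      const i = nextBatch++;
      results[i] = await reviewBatch(batches[i]);
      reviewed += batches[i].length;
      emit(sseFrame("progress", { reviewed, total: items.length }));
    }
  }

  const workerCount = Math.max(1, Math.min(concurrency, batches.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  emit(sseFrame("done", { total: items.length }));

  // Results are stored by batch index, so output order matches input order.
  const flat: R[] = [];
  for (const batchResult of results) flat.push(...batchResult);
  return flat;
}
```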

Legal Research Agent

Currently building a legal research agent with deep understanding of Thai law that answers questions with proper citations. Every answer is grounded in actual legal sources, giving lawyers confidence in the AI's recommendations and enabling them to verify claims quickly.

Monorepo Organization

Used Turborepo to organize the codebase as a monorepo with reusable components, shared types, and efficient build caching. This architecture allows rapid development across the Word add-in, web app, and backend services while maintaining code consistency.

Observability & Analytics

Integrated Langfuse to track agent performance and continuously adapt workflows to user needs. Used PostHog with beta customers for A/B testing and analytics, enabling data-driven decisions about feature development and UX improvements.

Secure On-Prem Deployments

Set up more secure versions of the product for customers requiring on-premises deployments. Used Azure OpenAI instead of consumer-grade providers, so enterprise customers can use the product while maintaining full control over their data and infrastructure.

Advanced RAG Pipeline

Built RAG pipelines using pgvector for vector storage and Jina AI for embeddings, with a hybrid approach that combines semantic search with traditional filtering. This lets customers power responses with their existing knowledge bases rather than relying solely on the LLM's training data, which keeps answers accurate and grounded.
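In pgvector, "semantic search plus filtering" is typically a SQL `WHERE` clause combined with `ORDER BY embedding <=> $1`, where `<=>` is pgvector's cosine-distance operator. The TypeScript sketch below shows the same logic in memory with toy two-dimensional vectors. The metadata fields (`jurisdiction`, `docType`) are hypothetical, and in production the query vector would come from the same Jina embedding model used to index the chunks.

```typescript
// Roughly the pgvector query this mirrors (table and column names hypothetical):
//   SELECT id FROM chunks
//   WHERE jurisdiction = $2 AND doc_type = $3
//   ORDER BY embedding <=> $1   -- cosine distance, smaller is closer
//   LIMIT $4;

interface ChunkMeta {
  jurisdiction: string;
  docType: string;
}

interface Chunk {
  id: string;
  embedding: number[]; // produced by the same embedding model as the query
  meta: ChunkMeta;
}

function cosineSimilarity(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Filter on structured metadata first, then rank the survivors by semantic similarity.
function hybridSearch(query: number[], chunks: Chunk[], filter: Partial<ChunkMeta>, k: number): Chunk[] {
  const keys = Object.keys(filter) as (keyof ChunkMeta)[];
  return chunks
    .filter((c) => keys.every((key) => c.meta[key] === filter[key]))
    .map((c) => ({ chunk: c, score: cosineSimilarity(query, c.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map(({ chunk }) => chunk);
}
```

Filtering first guarantees that, for example, a question about Thai law never retrieves a closely worded clause from another jurisdiction, however similar its embedding is.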